Public Safety Canada Internal Audit of Performance Measurement

March 16, 2016

Table of contents

- Executive Summary

- 1 Introduction

- 2 Findings, Recommendations and management responses

- Annex A: Internal Audit and Evaluation Directorate Opinion Scale

- Annex B: Status of PMS Development by Branch

- Annex C: Audit Criteria

- Annex D: Preliminary Audit Risks

- Annex E: Summary of 2013 State of Annual Performance Measurement

Executive Summary

Background

Performance measurement is defined as “process and systems of selection, development and on-going use of performance measures to guide decision-making”.Footnote1 Performance measurement is a results-based management tool that federal organizations are required to use to strengthen decision-making, program improvement and reporting, and it is becoming increasingly important in an era of open government and accountability.

Considering the importance of performance measurement, the Deputy Minister approved the Audit of Performance Measurement as part of the 2012-13 – 2014-15 Risk-Based Audit Plan.

Audit Objective and Scope

The objective of this audit was to provide reasonable assurance that the departmental performance measurement processes and practices were adequate, effective, and aligned with Treasury Board requirements.

The scope focused on the way performance indicators and measures were developed and how the data was collected and used by the Department. The audit also focused on conformance with Treasury Board (TB) and departmental policies, and the departmental audit and evaluation committee charters.

The audit sampled performance measurement information within all departmental activities including Grants and Contributions programs, policy and research activities, and internal support functions up until September 2015.

Summary of Findings

As per the TB and Public Safety (PS) policies and guidelines, a comprehensive performance measurement process includes:

- Formally defined and communicated policies and guidelines, and available training;

- Clear roles, responsibilities, accountabilities and oversight;

- Clear understanding of the procedures and practices for the development or amendment, and approval of performance measurement framework (PMF);

- Clear understanding of the procedures and practices for the development and approval of performance measurement strategies (PMS);

- PMF, PMS and other business planning alignment;

- Data collection (identified data collection techniques, sources of data, accurate and timely reporting); and

- Performance measurement used for reporting needs and sound decision-making.

Through the audit, we found that the Department has some fundamental controls and processes in place. These include:

- access to a sufficient policy suite providing clear roles, responsibilities and accountabilities;

- consultations with key partners and stakeholders during the development of the departmental PMF and program-level PMSs;

- improved indicators and targets in the development of the PMF;

- better integration of the PMF into the business planning cycle; and

- good understanding of what is involved in preparing a comprehensive PMS, with only two of 28 PMSs outstanding.

Based on the documentation review, interviews and sample tests and analysis, we found that PS performance measurement processes are incomplete:

- Although there were sufficient policies and guidance to ensure clear roles and responsibilities, we found that the roles and responsibilities of the Strategic Planning Division and the Evaluation Unit in reviewing and advising on PMS development require clarification.

- We found that no process exists to resolve concerns pertaining to the PMF.

- While committees' roles and responsibilities were clear and well documented, the performance measurement information presented at various committees was inconsistent.

- While PMF and PMS processes exist and included consultations within Public Safety and with external stakeholders, only a few programs demonstrated a linkage between their PMSs and the PMF.

- Interviewees indicated the need for further guidance and support in terms of developing performance indicators to measure policy activities.

- Limited data is being collected against the indicators in the PMF and PMSs; consequently, there is a small amount of data available to support decision-making and reporting purposes.

- Certain program areas relied on third-party performance information for which data collection and storage were not determined.

Audit Opinion

Improvements are requiredFootnote2 to the performance measurement processes and practices to increase the level of compliance with TB requirements, and their adequacy and effectiveness. There are opportunities to improve the performance measurement processes, and strengthen the collection and use of performance measurement information in support of management and oversight activities.

Statement of Conformance and Assurance

Sufficient and appropriate audit procedures were conducted and evidence gathered to support the accuracy of the opinion provided and contained in this report. The opinion is based on a comparison of the conditions, as they existed at the time, against pre-established audit criteria that were agreed upon with management. The opinion is applicable only to the entity examined and within the scope described herein. The evidence was gathered in compliance with the Treasury Board Policy and Directive on Internal Audit. The audit conforms to the Internal Auditing Standards for the Government of Canada, as supported by the results of the Quality Assurance and Improvement Program. The procedures used meet the professional standards of the Institute of Internal Auditors. The evidence gathered is sufficient to provide Senior Management with proof of the opinion derived from the internal audit.

Recommendations

- The Assistant Deputy Minister of PACB should ensure that:

  - indicators in the PMF, the RPP, and the DPR are aligned; and

  - the Chief Audit and Evaluation Executive is supported to review and advise on the PMF in accordance with the TBS Directive on the Evaluation Function.

- Each Assistant Deputy Minister should:

  - identify resources assigned to the development and implementation of PMSs; and

  - ensure linkages between the PMF and PMSs.

- Each Assistant Deputy Minister should:

  - ensure collection of accurate data against the PMF and PMS indicators to support timely decision-making and reporting; and

  - develop agreements for third-party data collection, where applicable.

Management Response

Management accepts the recommendations of Internal Audit. The recommendations recognize that the accountability for performance measurement is shared by all Departmental Managers.

The key actions to be taken by management to address the recommendations and findings and their timing can be found in the Findings, Recommendations and Management Response section of the report.

CAE Signature

____________________________

Audit Team Members

Deborah Duhn

Sonja Mitrovic

Kyle Abonasara

Acknowledgements

Internal Audit would like to thank all those who provided advice and assistance during the audit.

1. Introduction

1.1 Background

Performance measurement is defined as “process and systems of selection, development and on-going use of performance measures to guide decision-making”.Footnote3 Performance measurement is a results-based management tool that federal organizations are required to use to strengthen decision-making and reporting.

More specifically, performance measurement supports Public Safety (PS) in executing its leadership role to bring coherence to the activities of the Departments. It also provides levers to influence policy coherence, and provides insight into horizontal issues with many federal partners and through the delivery of the following program activities: National Security; Borders Strategies; Countering Crime; and Emergency Management.

The performance measurement processes ensure that relevant, accurate and timely information is available for sound decision-making and reporting. Ongoing performance measurement forms a strong foundation for results-based management; and is becoming increasingly important in an era of open government and accountability.

Considering the importance of the performance measurement in the Department's activities, the Deputy Minister approved the Audit of Performance Measurement as part of the 2012-13 – 2014-15 Risk-Based Audit Plan.

1.2 Legislative Framework

Performance measurement is defined through a set of Treasury Board (TB) policies, directives, and guidelines. TB policies include the Policy on Management, Resources and Results Structures (MRRS), Policy on Evaluation, and Policy on Transfer Payments. In particular, the Policy on MRRS requires that “the Government and Parliament receive integrated financial and non-financial program performance information for use to support improved allocation and reallocation decisions in individual Departments and across the Government.”Footnote4

1.3 Roles and Responsibilities

The Deputy Minister's, Branch Heads', and Chief Audit and Evaluation Executive's (CAEE) roles and responsibilities in relation to performance measurement are defined in both TB and PS policies, which include: the TB Policy on MRRS (2010), the TB and PS Policy on Evaluation (2009), the TBS Directive on the Evaluation Function (2009), and the TB Policy on Transfer Payments (2008). The following is a summary of key responsibilities from select TB and PS policies and directives.

The Deputy Head's main responsibilities are to ensure that:

- “ongoing performance measurement is implemented through the Department so that sufficient performance information is available to effectively support the evaluations of programs.”Footnote5

- “there is a framework to link expected results and performance measures to each program at all levels of the Program Alignment Architecture (PAA) and for which actual results are reported;

- Department's information systems, Performance Measurement Strategies (PMS), reporting, and governance structures are consistent with and support the Department's MRRS.”Footnote6

- “a PMS is established at the time of program design, and that it is maintained and updated throughout its life cycle, to effectively support evaluations or review of relevance and effectiveness of each transfer payment program; and,

- there is cost-effective oversight, internal control, performance measurement, and reporting systems in place to support management of transfer payments.”Footnote7

The Departmental Evaluation Committee (DEC):

- “reviews the adequacy of resources allocated to performance measurement activities as they relate to evaluation, and recommends to the deputy head an adequate level of resources for these activities.” Footnote8

Branch Heads' main responsibilities include:

- “developing and implementing PMS, including maintaining and updating their strategies throughout the life cycle of the program;

- ensuring that credible and reliable performance data are being collected to effectively support evaluations; and,

- submitting for review and advice the accountability and performance provisions to be included in Cabinet documents to the CAEE.”Footnote9

As Head of Evaluation, the CAEE's main responsibilities include:

- “reviewing and providing advice on PMSs for all new and on-going direct program spending to ensure they effectively support an evaluation for relevance and performance;

- reviewing and providing advice on the accountability and performance provisions to be included in cabinet documents;

- reviewing and providing advice on the Performance Measurement Framework (PMF) embedded in the Department's MRRS; and,

- submitting to the DEC an annual report on the state of performance measurement for programs in support of evaluations.”Footnote10

Program Managers' responsibilities include:

- “developing and implementing ongoing performance measurement strategies for their programs, and ensuring that credible and reliable performance data are being collected to effectively support evaluation;

- consulting with the head of evaluation on the performance measurement strategies for all new and ongoing direct program spending.”Footnote11

1.4 Audit Objective

The objective of this audit was to provide reasonable assurance that the systems and practices in place to support performance measurement were adequate, effective, and aligned with Treasury Board requirements.

1.5 Scope and Approach

The scope of the audit focused on the manner in which performance indicators and measures were developed and how the associated performance information was collected and used by the Department. The audit also focused on conformance with the TB Policy on Management, Resources and Results Structures (MRRS), the TB Policy on Transfer Payments, the TB Policy on Evaluation, TBS Directive on the Evaluation Function, the Department's Policy on Evaluation, DEC Charter, planning and reporting cycle, as well as the degree to which performance information was integrated into key decisions.

The audit sampled performance measurement information within all departmental activities including Grants and Contributions programs, policy and research activities, and internal support functions up until September 2015.

Exceptions:

This audit did not provide assurance in regard to:

- The implementation of and compliance with the full TB policies, but rather only those areas specific to performance measurement activities. An example of an area excluded would be the appropriateness of the processes followed for the development of the PAA.

1.6 Risk Assessment

The risk assessment conducted in the planning phase of the audit informed the development of the audit scope and criteria. See Annexes C and D for details.

The Department's business activities were distinctly different, ranging from transfer payments programs, to policy development, to operations. This diversity emphasized the need for a strong PMF to clearly understand the results achieved in each activity and align resources accordingly. The nature of some PS activities such as policy development creates challenges in defining performance indicators and data sources.

Program and policy areas also placed significant reliance on external stakeholders' data collection processes, some of which are less established, resulting in a higher degree of inherent risk in relation to data integrity and validity. Further, in some cases the dedication of resources to performance measurement activities competed against other priorities, which may have resulted in incomplete processes.

Many of the Department's activities are horizontal in nature and therefore include the participation of other departments and agencies from within and outside the portfolio. This horizontality added another layer of complexity in coordinating acceptance, commitment, and reporting of performance measurement by all parties involved.

1.7 Audit Opinion

Improvements are requiredFootnote12 to the performance measurement processes and practices to increase the level of compliance with TB requirements, and their adequacy and effectiveness. There are opportunities to improve the performance measurement processes, and strengthen the collection and use of performance measurement information in support of management and oversight activities.

1.8 Statement of Conformance and Assurance

Sufficient and appropriate audit procedures were conducted and evidence gathered to support the accuracy of the opinion provided and contained in this report. The opinion is based on a comparison of the conditions, as they existed at the time, against pre-established audit criteria that were agreed upon with management. The opinion is applicable only to the entity examined and within the scope described herein. The evidence was gathered in compliance with the Treasury Board Policy and Directive on Internal Audit. The audit conforms to the Internal Auditing Standards for the Government of Canada, as supported by the results of the Quality Assurance and Improvement Program. The procedures used meet the professional standards of the Institute of Internal Auditors. The evidence gathered is sufficient to provide Senior Management with proof of the opinion derived from the internal audit.

2. Findings, Recommendations and management responses

2.1 Public Safety Performance Measurement Processes

Through the review of various policies and guidelines pertaining to the management of performance measurement, we identified the following as mandatory items for comprehensive performance measurement processes:

- Formally defined and communicated policies and guidelines, and available training;

- Clear roles, responsibilities, accountabilities and oversight;

- Clear understanding of the procedures and practices for the development or amendment, and approval of performance measurement framework;

- Clear understanding of the procedures and practices for the development and approval of performance measurement strategies, and

- Alignment between PMF, PMS, and other business planning documents.

Public Safety performance measurement processes are incomplete.

Policy, Guidance and Training

Many policies and guidelines exist to provide guidance on performance measurement. Treasury Board approved performance measurement policies and directives include: Policy on MRRS, Policy on Evaluation, Directive on Evaluation Function, and Policy on Transfer Payments. In addition to the external policies, Public Safety has also developed and approved a Policy on Evaluation.

To support departments in the development of performance measurement tools, Treasury Board also issued the Guideline on Performance Measurement Strategy under the Policy on Transfer Payments and the Supporting Effective Evaluations: A Guide to Developing Performance Measurement Strategies. To complement the available guidance and to support program and policy areas, PS has also developed a guide that focuses on the development of logic models, Performance Measurement Strategy Guide – Information on Logic Models.

Although policies and guidelines are available, they mainly focus on performance measurement management of program areas and provide limited guidance for policy activities. The interviewees indicated that there is still a need for further guidance pertaining to the unique role of PS as coordinator and policy department.

To support the implementation of and compliance with the listed performance measurement policies, directives and guidelines, specialized Evaluation Programs and general management courses, such as G110, G126 and G226, are offered through the Canada School of Public Service.

Furthermore, the Strategic Planning Division in PACB has also developed and delivered a number of targeted presentations to various branches and directorates, including a series of workshops in 2012. While attendance at these workshops was limited, 50% of interviewees indicated a need for training and guidance, particularly around the development of indicators for the policy function and tools to capture the required data. Subsequent to the audit, the Evaluation Unit developed and delivered a training session on performance measurement indicators for policy activities to address the identified need.

Roles, Responsibilities, Accountabilities and Oversight

Document reviews and interviews indicated that generally there were clear roles, responsibilities and accountabilities and that they were in line with the policies, directives and guidance mentioned above.

The Directive on the Evaluation Function states that the Head of Evaluation is responsible for “reviewing and providing advice on the performance measurement framework embedded in the organization's Management, Resources and Results Structure”.Footnote13 We followed up with TB, which did not provide a clear interpretation as to whether “advice” was to indicate any form of accountability or how the enforcement of this “advice” should be performed. This allowed each individual Department to interpret the requirement and to develop its own methodology to ensure its needs were addressed and that it complied with the policy. While the PS and TBS policies set out roles and responsibilities concerning “advisory” and “review” of both the PMSs and the PMF, the PS Evaluation Function did not have a formal process for the review of all PMSs or the annual PMF. To date, the Evaluation Function has not been actively engaged in the PMF review as required by the TBS Directive on the Evaluation Function.

In addition to the Evaluation Function's advisory and review role, the Strategic Planning Division (SPD) has also supported the Department in performance measurement management. As formally documented within its Business Plan, the SPD is committed to “Promoting the stability of the PAA while amending it where necessary”.Footnote14 More specifically, SPD is responsible for:

- Developing a cascading PAA and an inventory of all programs and program measures;

- Ensuring business plan commitments, mitigation strategies and performance actuals are properly monitored and reported on throughout the year;

- Developing a database to collect/store departmental performance information;

- Supporting the branches in the development of their PMSs.

The 2014-15 Performance Management Agreements reviewed for each executive head also indicated management commitment toward performance measurement by including the following criterion:

“Significant progress has been made in achieving the expected results based on the executive's areas of responsibility in the PMF and mitigation strategies are in place to address key risks.”

While the roles, responsibilities, and accountabilities are clearly outlined, interviewees indicated that there has been misinterpretation between the roles of SPD and the Evaluation unit, specifically in the development and monitoring of performance measurement activities.

The TBS Directive on the Evaluation Function required the Head of Evaluation to present an Annual State of Performance Measurement report to the DEC. Between 2009 and 2013 this report was not completed. However, at the June 15, 2010 DEC meeting, performance measurement was discussed, and the Director General (DG), Evaluation tabled “Resourcing the Evaluation Function”. The presentation identified priority activities and recommended those that could be deferred. The Annual State of Performance Measurement report was identified as one of the activities that could be deferred, given that it would have limited value because the state of performance measurement throughout the Department was already known. DEC accepted the recommended prioritization of activities.

The first PS Annual State of Performance Measurement report was presented to the DEC in February 2014 (a summary of findings is available in Annex E). Its purpose was to provide the performance measurement information required to move the Department forward in terms of results-based management. Support for results-based management is intended to:

- Provide Ministers with a more cohesive performance story, including an understanding of program efficiency, for informed policy and allocation decisions;

- Promote sound day-to-day program decision-making; and,

- Support the quality and efficiency of evaluation services.

This report was a catalyst for drawing attention to and improving oversight of performance measurement by the DEC. The report recommended that all PMSs be approved by the responsible ADM. The development of the report and the adoption of its recommendations were a major factor in reinforcing the role of the Evaluation Unit in the coordination of these activities. Subsequent to the audit period, the Evaluation Unit began working with both SPD and the Grants & Contributions (G&C) Centre of Excellence to clarify roles and develop tracking tools and inventory lists.

The oversight governance for performance measurement is composed of various committees at different levels of the organization, namely the DEC, Departmental Audit Committee (DAC), Departmental Management Committee (DMC) and Branch Management Committees.

As per the TB Policy on Evaluation and the PS DEC Charter, the DEC has a number of specific and clearly defined responsibilities concerning performance measurement. More specifically, the DEC is responsible for reviewing “the adequacy of resources allocated to performance measurement activities as they relate to evaluation, and to recommend to the deputy head an adequate level of resources for these activities”.Footnote15 Further to the findings and recommendations outlined in the PS Annual State of Performance Measurement report and subsequent to the audit period, the PS DEC charter was revised to include the additional responsibilities pertaining to “the periodic reviews [of] Assistant Deputy Minister (ADM) approved PMSs, and reviews [of] the departmental PMF annually”.Footnote16

In line with the TB Policy on Internal Audit, through the review of committee records of decisions, we found that DAC received information concerning the MRRS implementation and had reviewed key performance reports such as RPP, DPR and the 2014-15 PMF. However, we also noted that the PS Annual State of Performance Measurement report was not shared with the DAC members.

As per the DMC Terms of Reference, performance information is to be presented at mid-year and year-end for review. Specifically pertaining to the PMF, the DMC is required to review the initial PMF, and then review its results at mid-year and year-end. During the audit period, we found that the PMF was reviewed bilaterally with ADMs instead of at DMC, as would have been expected. An ADM meeting was held to discuss the final PMF. Furthermore, due to timing constraints, the 2013-14 PMF mid-year results were not presented at DMC, as they were considered no longer relevant. In 2014-15, data for only a select number of indicators was presented at the mid-year review and year-end discussions. The committee records of decisions indicated that further action was required for the ADM of PACB to review the existing indicators and ensure that those chosen for presentation identified issues requiring appropriate and timely course corrections.

Finally, we conclude that there is inconsistency in performance information presented and used at the various committees throughout the Department. This may limit the effectiveness of committees.

Procedures and Practices for the Development and Approval of the PMF

A departmental PMF sets out the expected results and the performance measures at the PAA level. The indicators in the PMF are limited in number and focus on supporting departmental monitoring and reporting.

The development of the Departmental PMF has improved, with better indicators, targets and integration into departmental business planning. We found that the PMF was aligned with the TB Guidelines for MRRS; it identified indicators for each sub-sub PAA activity, and it was individually signed off by all ADMs.

The interviewees indicated that the SPD unit conducted a consultative one-on-one approach with individual program areas. These consultations included topics such as TBS-requested changes, general PMF development processes, and the implementation of indicators. Although interviewees acknowledged generally good participation during the PMF development consultations, the PMF expectations and use remained unclear. It should be noted that some interviewees indicated that the PMF indicators were of little value to them because they did not provide the information necessary to guide their program.

Although SPD organized meetings with branch coordinators and program managers to facilitate the PMF amendment process, we found no evidence of an established methodology that would support the request for amending the PMF. Interviewees also stated that the Evaluation Function had not been consulted to support its advisory and review role.

TBS provided feedback on the PS PMF, and SPD retained a consultant in 2012-13 to review the PMF. Both TBS and the consultant made observations, most notably that there was:

- Limited amount of instructions or guidance provided in the PMF development process.

- Lack of consistency across the Department in terms of expected results and indicators.

- Outcome statements at the very broad portfolio level with limited direct alignment.

Although SPD agreed with some of the observations, we found no evidence that these observations were communicated to senior management.

While SPD led the PMF development process and ensured compliance with the MRRS as well as alignment with the PAA, we found that there is still a need to review the PMF to ensure its clarity and completeness.

Procedures and Practices for the Development and Approval of PMSs

PMSs are used to identify and plan how performance information is to be collected to support ongoing monitoring of a program and its evaluation. They are intended to effectively support both day-to-day program monitoring and delivery, and the eventual evaluation of that program. There are no imposed limits on the number of indicators, expected results or outputs that can be included; however, their “successful implementation […] is more likely if indicators are kept to a reasonable number”.Footnote17

We found that there was a good understanding of the development of a comprehensive PMS, including Logic Models, stakeholder discussions and indicator identification. More specifically, 10 out of 11 interviewees indicated that they have collaborative discussions with program stakeholders. Program areas have made efforts to ensure awareness and relevancy that are particularly important given our reliance on input from partners.

A judgemental sample of approximately 30% of the total PMSs was reviewed to assess their progress and the integrity of their indicators. The results revealed areas for improvement, mainly pertaining to the integrity and the identification of indicators. More specifically, we found:

- a lack of indicators related to the PS leadership and coordination role;

- a weak link between most of the indicators and the outputs and outcomes;

- indicators that generally focused on the activities of the applicants/recipients;

- vague and undefined outcome indicators that may be interpreted differently and present a risk of inconsistent reporting, and,

- lack of resources dedicated to performance measurement collection, analysis and monitoring, with a high number of indicators relying on documentation review, which may raise capacity issues.

Though there are still improvements to be made, the development of PMSs has progressed since the 2013 PS Annual State of Performance Measurement report.

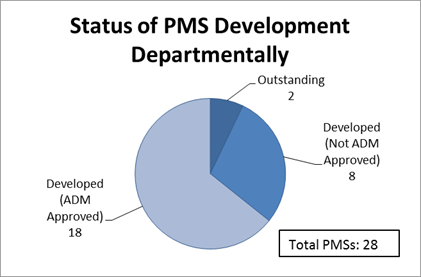

Furthermore, this report also increased senior management's interest by requiring that the responsible ADMs approve PMSs. More specifically, the Deputy Minister requested that those PMSs assessed as “Needs Improvement” be updated and approved by each applicable ADM by September 30, 2014. The following chart presents the status of PMSs as of September 2015.

Figure 1: Status of PMS Development Departmentally

Image Description

This pie chart entitled “Status of PMS Development Departmentally” is divided into 3 sections. The total of the complete pie chart equals 28 PMSs. The largest section represents 18 out of the 28 total PMSs and is labelled “PMS's that are developed and ADM approved”. The second largest section represents 8 out of the 28 total PMSs and is labelled “PMSs that are developed but are not yet ADM approved”. The smallest section represents 2 out of the 28 total PMSs and is labelled “outstanding”.

The two outstanding PMSs are in the policy areas.
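The proportions behind Figure 1 can be checked with a short calculation. The following is a minimal sketch rather than part of the audit work: the counts are those given in the image description, while the variable and function names are hypothetical and chosen only for illustration.

```python
# Counts taken from the Figure 1 image description (status as of September 2015).
# The variable and function names are hypothetical, chosen for illustration only.
pms_status = {
    "developed and ADM approved": 18,
    "developed but not yet ADM approved": 8,
    "outstanding": 2,
}


def status_shares(counts):
    """Return each status count as a percentage of the total, rounded to one decimal."""
    total = sum(counts.values())
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


shares = status_shares(pms_status)
total_pms = sum(pms_status.values())

for label, pct in shares.items():
    print(f"{label}: {pms_status[label]} of {total_pms} ({pct}%)")
```

On these counts, roughly 64% of the 28 PMSs were developed and ADM approved, about 29% were developed but not yet approved, and about 7% were outstanding.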

PMF, PMS and Other Business Document Alignment

The alignment between PMF, PMSs and other business documents, such as business plans, enables the use of the performance measurement information for sound management.

Through the review of 2014-15 branch business plans, we found that there was alignment with the PMF at the indicator and target levels. This alignment also carried through to the work plans, which reflected a general commitment to improving performance measurement activities.

However, interviewees indicated a disconnect between the PMF and PMSs. Through the documentation review, we found only a few examples of deliberate alignment of the individual PMS indicators to the PMF. Considering the PMF and PMS development processes, further improvements are needed to ensure alignment between the PMF and PMSs.

Although many efforts were made to improve the performance measurement processes within the Department, we found that there are opportunities to improve the development processes and accentuate the alignment between PMSs and PMF. The lack of alignment between PMSs and PMF presents risks of inconsistency that may result in a weak PMF and PMSs, and a misrepresentation of the Department's performance.

2.2 Public Safety Performance Data Collection, Reporting and Decision-Making

As indicated by the policies and guidelines, we identified the following as the required elements for performance data collection and use:

- Data collection (identified data collection techniques, sources of data, accurate and timely gathering)

- Performance measurement information is used for reporting needs and is appropriately integrated for sound decision-making.

PS is collecting limited data against the indicators in the PMF and PMSs; consequently, there is a small amount of data available to support decision-making and reporting purposes.

PMF Data Collection, Reporting and Decision-Making

The Department has demonstrated many improvements within the performance measurement processes. While efforts were made to refine and adjust the PAA structure and align its PMF indicators, we found that the Department has not demonstrated stability in the past year to permit data collection and analysis of results.

Documentation review indicated that data for approximately 25% of the 2012-13 PMF indicators were not collected and reported in 2013-14. Furthermore, our analysis of the 2013-14 PMF found that a number of indicators did not have identified data sources; these indicators were therefore determined not to be measurable. The review of the Report on Plans and Priorities (RPP) and of the Departmental Performance Report (DPR) revealed divergence between the indicators set out in the plan and those against which results were reported. We found no evidence of a rationale to support the changes made to some of the RPP indicators in the DPR of that year. The review also revealed a lack of consistency in the indicators between the PMF development and reporting stages. Due to these inconsistencies, we identified difficulties in ensuring the integrity of the reporting process and in determining the real status of performance measurement within the Department.

The review of the 2014-15 PMF indicated that only a selection of indicators was presented to the DMC for review of mid-year results. We found no evidence of a formal selection process or a rationale for their selection. Based on the performance documentation analysis and the review of committee agendas and records of decisions, we found that there was no status update on the complete PMF. Furthermore, we noted that although performance information was occasionally presented at the committees, PMF concerns and issues were not fully disclosed and discussed to ensure that corrective actions were taken.

At the Branch Management level, interviewees stated that they had established regular meetings; however, there was limited formal documentation. Many of the interviewees indicated that there was little demand from senior management for performance measurement data and/or results. Most of the performance measurement discussions that took place focused on the establishment of the indicators and identification of data sources.

PMS Data Collection, Reporting and Decision-Making

Although there was a good understanding of the PMS development processes and 64% of the PMSs have been developed and approved by the ADM, the PMSs are still at an early stage of implementation.

Efforts were made to collect performance measurement information where possible; however, we noted that data was not collected on a regular basis. The performance information was gathered on an ad hoc or as-needed basis. The review of a sample of PMSs indicated that four of 10 programs, namely the Crime Prevention Program, First Nations Policing Program, Government Operations Centre and Critical Infrastructure, were collecting and using performance measurement information to support aspects of program management.

The review of PMSs and interviews indicated that, in some cases, data collection and use were also limited by the need to rely on third-party information. This reliance required ongoing and active engagement of stakeholders, which presented challenges in timely data gathering. Furthermore, we noted that the reliance on third parties was not clarified and that program areas were at the early stages of determining the processes around third-party data collection and storage. Finally, the PMS review sample indicated limited methodology for analysis and data interpretation.

Considering the expected use of performance measurement information, we found that the Department is still at the early stage of data collection, which limits the information available for reporting and sound decision-making. The audit observations identified opportunities for promoting the use of performance measurement information and improving the identification of data source, regular data collection, analysis, and reporting. Because the Department has collected limited performance data, there is a risk of insufficient information available for sound decision-making.

2.3 Recommendations

1. The Assistant Deputy Minister of PACB should ensure that:

   - There is alignment between indicators in the PMF, the RPP, and the DPR.

   - The Chief Audit and Evaluation Executive is supported to review and advise on the PMF in accordance with the TBS Directive on the Evaluation Function.

2. Each Assistant Deputy Minister should:

   - Identify resources assigned to development and implementation of PMSs.

   - Ensure linkages between the PMF and PMSs.

3. Each Assistant Deputy Minister should:

   - Ensure collection of accurate data against the PMF and PMS indicators to support timely decision-making and reporting.

   - Develop agreements for third-party data collection and storage, where applicable.

2.4 Management Response and Action Plan

Management accepts the recommendations of Internal Audit. The recommendations recognize that the accountability for performance measurement is shared by all Departmental Managers.

| Actions Planned | Planned Completion Date |
|---|---|
| Recommendation #1: | |
| PACB Contribution: | November 30, 2017 |
| Recommendation #2: | |
| PACB Contribution: | April 30, 2017 |
| EMPB Contribution: | November 30, 2016 |
| CMB Contribution: | March 31, 2018 |
| CSCCB Contribution: | March 31, 2017 |
| NCSB Contribution: | March 31, 2017 |
| Recommendation #3: | |
| PACB Contribution: | August 31, 2017 |
| EMPB Contribution: | March 31, 2017 |
| CMB Contribution: | March 31, 2018 |
| CSCCB Contribution: | March 31, 2017 |
| NCSB Contribution: | April 30, 2017 |

Annex A: Internal Audit and Evaluation Directorate Opinion Scale

The following is the Internal Audit and Evaluation Directorate audit opinion scale by which the significance of the collective audit findings and conclusions is assessed.

| Audit Opinion Ranking | Definition |
|---|---|
| Well Controlled | |
| Minor Improvement | |
| Improvements Required | Improvements are required (at least one of the following two criteria are met): |
| Significant Improvements Required | Significant improvements are required (at least one of the following two criteria are met): |

Annex B: Status of PMS Development by Branch

| Branch | Developed and ADM approved | Developed but not yet ADM approved | Outstanding | Total |
|---|---|---|---|---|
| National and Cyber Security | 3 | 2 | 0 | 5 |
| Portfolio Affairs and Communications | 2 | 1 | 0 | 3 |
| Community Safety and Countering Crime | 9 | 4 | 2 | 15 |
| Emergency Management and Programs | 4 | 1 | 0 | 5 |
| Departmental Total | 18 | 8 | 2 | 28 |
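The branch figures in this annex can be cross-checked against the departmental totals and against the 64% approval rate cited in section 2.2. The following is a minimal sketch of that arithmetic, not part of the audit methodology. The dictionary layout and the function names `departmental_totals` and `share` are illustrative assumptions; the counts are copied from the table above.

```python
# Counts copied from the Annex B table, per branch:
# (developed and ADM approved, developed but not yet approved, outstanding).
# The data layout and helper names are illustrative, not taken from the audit.
branch_counts = {
    "National and Cyber Security": (3, 2, 0),
    "Portfolio Affairs and Communications": (2, 1, 0),
    "Community Safety and Countering Crime": (9, 4, 2),
    "Emergency Management and Programs": (4, 1, 0),
}


def departmental_totals(counts):
    """Sum each status column across all branches."""
    approved = sum(row[0] for row in counts.values())
    pending = sum(row[1] for row in counts.values())
    outstanding = sum(row[2] for row in counts.values())
    return approved, pending, outstanding


def share(part, whole):
    """Return part/whole as a percentage rounded to the nearest whole number."""
    return round(100 * part / whole)


approved, pending, outstanding = departmental_totals(branch_counts)
total = approved + pending + outstanding

print(f"Total PMSs: {total}")
print(f"Approved: {approved} ({share(approved, total)}%)")
print(f"Not yet approved: {pending} ({share(pending, total)}%)")
print(f"Outstanding: {outstanding} ({share(outstanding, total)}%)")
```

Under these assumptions, the column sums reproduce the Departmental Total row (18, 8, 2 and 28), and 18 of 28 rounds to the 64% figure reported in the findings.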

Annex C: Audit Criteria

| Audit Criteria | |
|---|---|
| Criterion 1: | The Department had formally defined and communicated adequate policy and guidance to ensure the effective implementation of performance measurement. |
| Criterion 2: | Individual programs and activities had developed Performance Measurement Strategies (including engagement of appropriate stakeholders), identified the data source(s), and implemented data collection and reporting processes. |
| Criterion 3: | The PMF process engaged the appropriate stakeholders to ensure well-defined and measurable performance indicators which were aligned to departmental objectives. |
| Criterion 4: | The PMF was informed by and aligned to lower level PMS processes. PMS processes and activities were further aligned to branch business plans and work plan activities to ensure the appropriate focus on the achievement of results. |
| Criterion 5: | Performance measurement information was appropriately integrated into decision-making. |
| Criterion 6: | The Department was able to report to Canadians, Parliamentarians, and Central Agencies on its achievement of results in a manner that was consistent with its objectives and program outcomes. |
| Criterion 7: | The Department had performance measurement accountability controls. |

Annex D: Preliminary Audit Risks

The following is a summary of the key risks to which PS is exposed in relation to Performance Measurement.

| Key Area | Risk Statement |
|---|---|
| Culture | Risk that Performance Measurement methodologies and practices are not instilled in business activities. |
| Engagement | Risk that key personnel are not appropriately engaged in all aspects of Performance Measurement. |
| Integration | Risk that key performance measurement documents (e.g. PMF, PMS, Business plans) may not be aligned and outcomes and indicators may not be appropriate. |
| Performance Data | Risk that program and policy areas' data monitoring activities may not be sufficient to challenge or validate external information required to ensure the integrity of the performance indicators. |
| Use | Risk that performance information is not utilized by key personnel to guide decision-making, i.e. resource allocations or re-alignment of project objectives. |
| Reporting | Risk that the Department is unable to provide a comprehensive story in relation to its activities to Canadians, Parliamentarians, and Central Agencies. |

Annex E: Summary of 2013 State of Annual Performance Measurement Report

The main purpose of the 2013 State of Annual Performance Measurement report was to assess the existence and completeness of the PMSs for the identified program and policy activities. It evaluated the completeness of the PMSs as described by TB guidance, which identifies key components including logic models, indicators, and data collection. The report sets a departmental baseline for future comparison and summarizes performance measurement gaps.

The report did not attempt to provide any conclusions as to whether those programs, which had PMSs, were implementing them and integrating them into their program management cycles. This analysis was the purpose of this audit.

The main findings of the Annual Report were:

- The state of performance measurement and accountability for approval of performance measurement strategies varies across the Department.

- The assessment found that:

  - About 15% of programs require minor modifications to their performance measurement strategies.

  - Close to 55% require a moderate amount of modification.

  - About 30% require significant modification.

- Most programs requiring significant change are the smaller (excluding FNPP, NCPP) transfer payment programs that have incomplete performance measurement strategies because performance measures only exist in the programs' terms and conditions; thus, essential components are absent.

- Programs did not link their program objectives to the Program Alignment Architecture.

- Linkages to foundational Cabinet documents were not clearly outlined.

- Most programs did not consider performance measurement costs, making it difficult to assess whether the performance measurement plans were realistic.

- Few programs had developed indicators for program relevance and efficiency.

- Most programs had not established targets or baselines for indicators.

- There are some general performance measurement strengths across the Department in that the performance measurement strategies:

  - Contained all components required by Treasury Board guidance;

  - Clearly articulated their program profiles and depicted their theoretical foundation through their logic models;

  - Had developed performance indicators for their outputs and expected outcomes;

  - Included indicators that were generally of good quality (valid, reliable and realistic);

  - Defined roles and responsibilities for data collection, analysis and reporting; and,

  - Included evaluation strategies.

Footnotes

1. TB Results-Based Management Lexicon
2. Audit opinion assessment scale can be found in Annex A
3. TB Results-Based Management Lexicon
4. TB Policy on Management, Resources and Results Structure
5. TB Policy on Evaluation
6. TB Policy on Management, Resources and Results Structure
7. TB Policy on Transfer Payments
8. TB Policy on Evaluation
9. PS Policy on Evaluation
10. TBS Directive on the Evaluation Function
11. TB Directive on the Evaluation Function
12. Audit opinion assessment scale can be found in Annex A
13. TB Policy on Evaluation
14. SPD Business Plan
15. TB Policy on Evaluation
16. PS DEC Charter
17. TB Supporting Effective Evaluations: A Guide to Developing Performance Measurement Strategies
